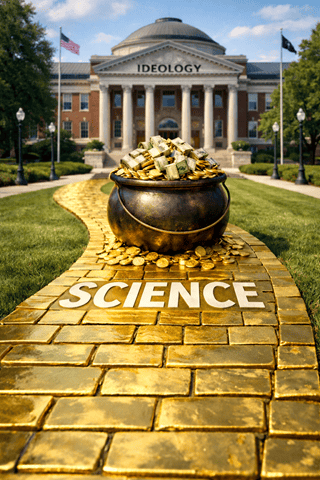

The Myth Of Neutral Science Just Cracked

For years, we’ve been lectured to “trust the science” as if science were some sterile, ideology-free oracle floating above human bias. Turns out that’s a comforting fairy tale. When 158 scientists were handed the same data and asked the same question, their conclusions didn’t magically converge on the truth. They split along political lines. Same numbers. Same dataset. Different “realities.”

If that doesn’t make you angry, it should.

This wasn’t some fringe blog experiment. It was a serious, peer-reviewed analysis showing that researchers’ political ideology predicted which conclusions they drew from the very same data. In other words, the conclusion often shows up before the analysis even begins.

Same Data, Wildly Different “Truths”

Seventy-one research teams analyzed identical international survey data to answer a straightforward question: Does immigration affect public support for social welfare programs? They ran more than 1,200 statistical models. The results were all over the map.

Some teams found immigration eroded support for welfare. Others found it increased support. Many found no effect at all. That level of chaos isn’t healthy scientific disagreement. It’s a warning flare.

And the pattern was unmistakable. Researchers who favored relaxed immigration policies tended to find positive effects. Researchers who favored tougher immigration laws tended to find negative results. This wasn’t about math errors. It was about choices—what variables to include, how to measure immigration, which years to study, which countries “count.”

That’s where ideology quietly slipped its hand onto the scale.

The Hidden Power Of Researcher Choice

Here’s the dirty secret of complex data analysis: there are dozens of defensible ways to slice the numbers. Which definition you choose, which controls you add, which outliers you drop—each decision nudges the outcome.

The study found that just five design decisions accounted for about two-thirds of the ideological split in the results. That’s not accidental noise. That’s structured bias.

Nobody had to fake data. Nobody had to lie. They just made “reasonable” choices that happened to align with how they already believed the world works. That’s confirmation bias wearing a lab coat.

Extremes Perform Worse, But Get Louder

There’s another inconvenient finding that rarely makes headlines. Teams with stronger ideological views—on either side—produced lower-quality research, according to blind peer reviews. Moderates, the ones without a political axe to grind, did better work.

Yet guess which studies get amplified in media, policy debates, and activist talking points? Not the careful, boring, moderate ones. The loud, certainty-soaked conclusions get the microphone, especially when they flatter powerful interests or fashionable narratives.

“Follow The Science” Has Become A Power Play

This is why “follow the science” has started to sound less like humility and more like a demand for obedience. When science is filtered through funding incentives, publication pressure, career risks, and ideological conformity, it ceases to be a neutral referee and becomes a political weapon.

That doesn’t mean science is worthless. It means scientists are human. They want approval. They want grants. They want to be on the “right side” of history as defined by their peers. And when the system rewards certain conclusions, don’t be shocked when those conclusions keep appearing.

The Reproducibility Crisis Is A Trust Crisis

This study lands squarely in the middle of a broader reproducibility crisis. If equally qualified experts can produce opposite answers from the same data, the problem isn’t the public’s skepticism. The problem is the process.

Transparency, open data, preregistered methods, and genuine ideological diversity aren’t optional extras. They’re the only way to keep science from turning into polished advocacy dressed up as objectivity.

Bottom Line

Science doesn’t fail because people question it. Science fails when it pretends humans aren’t involved. If political beliefs can predict outcomes before the analysis even starts, then blind trust isn’t rational—it’s reckless. The real question isn’t whether you “follow the science.” It’s whether the science is following evidence, or chasing the politics that pay.

We are so screwed.

— Steve