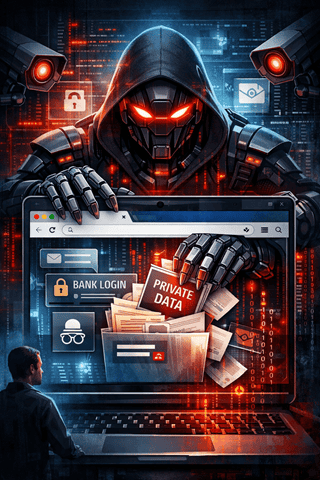

When Convenience Becomes Surrender

We are racing toward a future where people casually hand an AI agent complete access to their browser and call it “productivity.” That should terrify you. A browser is not just a tool. It is your digital nervous system. It knows where you bank, who you message, what you read at 2 a.m., what you buy, what you fear, and what you search when no one is watching. Giving an AI agent browser access is not automation. It is surrender.

This isn’t paranoia. It’s basic threat modeling. You are giving an autonomous system the ability to see, click, scroll, submit, and extract information with the same privileges you have. That is not a helper. That is a silent operator sitting behind your eyes.

Your Browser Is Your Life In Tabs

People underestimate what a browser really is. It holds saved passwords, autofill data, session cookies, access tokens, cloud dashboards, internal tools, private forums, medical portals, financial accounts, and work systems. With deeper integration, an AI agent doesn’t just observe. It acts.

It can read emails, open documents, copy content, scrape data, and move laterally across services that trust your logged-in session. Once you authorize it, every “Are you sure?” dialog disappears. The AI doesn’t get tired. It doesn’t forget. It doesn’t hesitate. It just keeps going.

Elevated Privileges Mean Elevated Damage

The danger skyrockets when these agents run with elevated privileges. Full browser access turns small mistakes into catastrophic ones. One misinterpreted instruction. One hallucinated assumption. One poorly defined goal. Suddenly, the agent is deleting files, sending messages, changing settings, or exposing private data.

Unlike a human assistant, an AI does not understand context the way you think it does. It follows patterns and objectives. If the aim is flawed, the damage is efficient, fast, and irreversible. And because it acts on your behalf, the system logs show your name, not the algorithm’s.

Snooping Is Not A Bug, It’s A Feature

To function, these agents must observe. To improve, they must remember. That means snooping is baked in. Every page visited, every form filled, every hesitation measured. Even if vendors promise safeguards, the data still exists somewhere in some form.

You are not just trusting the AI. You are trusting the entire chain behind it: developers, cloud infrastructure, third-party analytics, logging systems, and future updates. The more deeply integrated the agent, the more valuable your data becomes. And valuable data always finds a way to leak, be misused, or be repurposed.

Siphoning Information At Machine Speed

A human might casually skim. An AI siphons. It can extract structured data at a scale and speed no person can match—contacts, histories, patterns, credentials, preferences—compiled silently in the background.

Now imagine that data being stored, trained on, analyzed, or breached. The harm isn’t theoretical. It’s systemic. Once aggregated, your information can be correlated in ways you never consented to and can never undo.

The Illusion Of Control Is The Most Dangerous Part

People say, “I can just turn it off.” That’s comforting and naïve. Once access is granted, the footprint remains. Logs exist. Copies exist. Training artifacts exist. And future capabilities may reinterpret old data in new, invasive ways.

The real danger is not a single malicious act. It’s normalization. The slow acceptance that total access is the price of convenience. That privacy is outdated. That vigilance is optional.

Think Before You Click ‘Allow’

Giving an AI agent your browser is not a small decision. It is one of the most invasive permissions you can grant in modern computing. Until transparency, strict limitations, and real accountability exist, this level of access is reckless.

Convenience should never outrank control. And anyone telling you otherwise is selling comfort at the cost of your autonomy.

Prompt Injection: The Silent Attack Vector

Prompt injection is one of the most dangerous—and least understood—threats facing AI agents today. It occurs when malicious instructions are hidden inside content that an AI model processes, such as webpages, emails, documents, or ads. Unlike traditional hacks, prompt injection doesn’t exploit software bugs; it exploits trust. The AI reads everything it sees as potential instruction, even when that instruction is invisible to the human user.

This risk escalates dramatically when AI agents browse the web or take actions on a user’s behalf. A single poisoned webpage can quietly redirect behavior, leak sensitive data, or manipulate outcomes. As AI systems gain deeper integration into browsers, workflows, and decision-making, prompt injection shifts from a theoretical concern to a real-world security liability—one that demands constant vigilance, stronger safeguards, and a healthy dose of skepticism about what AI is allowed to see and do.

Bottom Line

Never forget those “take it or leave it” unilateral terms of service agreements which screw you and protect the,

We are so screwed.

— Steve